Beyond the mean

March 5, 2015

Since launching our data science bootcamp, we’ve started to do quite a bit of data literacy training to help organizations improve their ability to “think like a data scientist.” In the spirit of our hipster classifier—a simple game that introduces machine learning, without any computers or equations—we have been developing similarly interactive sessions to introduce concepts like data visualization, experimental design, etc. without all the technical detail that practitioners need to know on a day-to-day basis. For a recent data literacy training workshop, we developed a session that was intended to help participants appreciate more sophisticated means of analyzing data, beyond just taking the average of a bunch of numbers in a spreadsheet.

To that end, we created an activity that involved rolling dice so that participants could learn how to tell whether the distributions of numbers from their dice were, in fact, the same or different. We wrapped the whole exercise around a dice game where the winners were, naturally, rewarded with chocolate.

“Yeah, yea, yah. I’ve heard of using dice games to learn about statistics before. So what?”

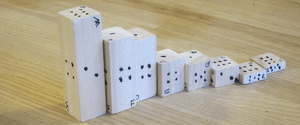

In our case, we developed dice that were clearly biased so that anyone looking at the dice could clearly tell that the dice should give different results, even if the data don’t show it yet. That is, we created our own weighted dice:

In the movies, the canonical weighted die looks normal, but has lead weights inside one side. While this flavor of weighted die might be great for capturing the imagination, it is not terribly conducive to teaching statistics. It isn’t obvious how putting additional mass on the 1, for example, might lead to more rolls of a 6. It’s often just confusing.

So we fabricated our own dice from 1”x1” rectangular wood rods that you can buy from just about any hardware store. Rather than cutting all of the dice to be perfect 1”x1”x1” cubes, we cut them at random heights so that some were as small as 5/16” tall whereas others were over 3” tall. We then labeled the dice in the usual way, that is, so that opposite sides would always add up to 7. In addition, we made sure that the square sides of the dice were always labeled with either a 1 or a 6.

Participants were asked to roll their dice 10 times and record the results in a spreadsheet. An interesting property of dice like these is that the theoretical average roll is always 3.5: opposite faces add up to 7 and, by the symmetry of the block, are equally likely to land face up, so each opposite pair averages 3.5 no matter how often it comes up. So when participants just looked at the mean of the dice, all of the dice appeared to be about the same even though they were clearly different. This is an important lesson for anyone inclined to work with data.
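To see why the mean hides the difference, here is a minimal Python sketch. It assumes that the two faces of each opposite pair are equally likely, and the pair weights for the "flat" and "tall" dice are made-up numbers for illustration, not measurements from our dice.

```python
from fractions import Fraction

# Opposite faces of a standard die add up to 7.
OPPOSITE_PAIRS = [(1, 6), (2, 5), (3, 4)]

def face_probabilities(pair_weights):
    """Turn integer weights for the three opposite pairs into face probabilities.

    Assumption: each pair's probability is split evenly between its two faces.
    """
    total = sum(pair_weights)
    probs = {}
    for (a, b), weight in zip(OPPOSITE_PAIRS, pair_weights):
        share = Fraction(weight, total) / 2
        probs[a] = share
        probs[b] = share
    return probs

def expected_roll(probs):
    """Theoretical mean of a single roll."""
    return sum(face * p for face, p in probs.items())

def variance(probs):
    """Theoretical variance of a single roll."""
    mean = expected_roll(probs)
    return sum(p * (face - mean) ** 2 for face, p in probs.items())

# Hypothetical dice: weights are for the pairs (1,6), (2,5), (3,4).
dice = {
    "fair cube": face_probabilities([1, 1, 1]),
    "flat die": face_probabilities([8, 1, 1]),    # mostly lands on 1 or 6
    "tall die": face_probabilities([1, 10, 10]),  # rarely lands on 1 or 6
}

for name, probs in dice.items():
    print(name, "mean:", float(expected_roll(probs)),
          "variance:", round(float(variance(probs)), 2))
```

Every die in the sketch has a mean of exactly 3.5, while the variances differ, which is the kind of difference the mean alone cannot reveal.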

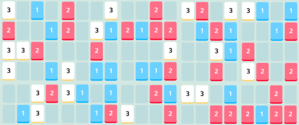

We then revealed the next tab of the spreadsheet to look at the distributions of the different dice. After only 10 rolls, all of the dice had distributions that appeared extremely different, but were they? How do you know?

To answer these questions, we invited the participants to roll their dice 20 more times to generate a total of three different samples of 10 rolls each. In the next tab of the spreadsheet, we visualized how these three samples had slightly different frequencies of the various numbers appearing. That is, the fact that we were only sampling 10 rolls meant that we had quite a bit of uncertainty around the true frequency that we might expect. This is the origin of sampling error.
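The same effect is easy to reproduce without physical dice. The sketch below simulates three samples of 10 rolls from a single die; the face weights are hypothetical, chosen only to resemble a short die that favors 1 and 6.

```python
import random
from collections import Counter

FACES = [1, 2, 3, 4, 5, 6]

def roll_sample(weights, n_rolls, rng):
    """Roll a weighted die n_rolls times and return the count for each face."""
    rolls = rng.choices(FACES, weights=weights, k=n_rolls)
    counts = Counter(rolls)
    return [counts[face] for face in FACES]

# Hypothetical weights for a flat die (an assumption, not measured values).
flat_die = [4, 1, 1, 1, 1, 4]

rng = random.Random(2015)  # fixed seed so the run is repeatable
samples = [roll_sample(flat_die, 10, rng) for _ in range(3)]

for i, counts in enumerate(samples, start=1):
    print(f"sample {i}: counts for faces 1-6 = {counts}")
```

All three samples come from the same die, so any differences between their counts are due to sampling alone.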

We then revealed the final tab of the spreadsheet to show the participants the error bars on each of the dice to bring up the concept of statistical significance. Whenever the error bars of the different dice overlapped, you could not rule out the possibility that these dice were, in fact, the same. As a final activity, we encouraged participants to continue rolling the dice until they could be confident that the dice were different. By increasing the amount of data they collected, participants increased the statistical power of their comparison.
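As a rough illustration of how error bars shrink with more rolls, the sketch below computes an approximate 95% interval for the share of rolls showing a given face and checks whether two intervals overlap. The normal-approximation formula is an assumption made for this example (it is crude at 10 rolls), and the observed shares are made up.

```python
import math

def proportion_interval(successes, n_rolls, z=1.96):
    """Approximate 95% interval for a proportion (normal approximation)."""
    p = successes / n_rolls
    half_width = z * math.sqrt(p * (1 - p) / n_rolls)
    # A proportion cannot leave the range [0, 1], so clamp the bounds.
    return max(0.0, p - half_width), min(1.0, p + half_width)

def intervals_overlap(a, b):
    """True if the two (low, high) intervals share any values."""
    return a[0] <= b[1] and b[0] <= a[1]

# Hypothetical results: die A shows a 6 on 50% of rolls, die B on 20%.
for n_rolls in (10, 100):
    bar_a = proportion_interval(successes=n_rolls // 2, n_rolls=n_rolls)
    bar_b = proportion_interval(successes=n_rolls // 5, n_rolls=n_rolls)
    print(n_rolls, "rolls:", bar_a, bar_b,
          "overlap" if intervals_overlap(bar_a, bar_b) else "no overlap")
```

With the same observed shares, the bars overlap at 10 rolls but separate at 100. Comparing error bars by eye is a teaching heuristic rather than a formal hypothesis test.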

The whole session took about 1.5 hours, including time for questions, and was a really fun way for participants to start to think beyond your average average (pun intended).